Proposition. The adjacency matrix of a graph is a real symmetric matrix.

Probability of adjacent connection between $R_j$ and $C_j$, $P(R_j\sim C_j)=\frac{1}{2}$, $\ P(R_j\not\sim C_j)=\frac{1}{2}$ $$ P(A(G)\ is \ invertible)=P(M\ is\ invertible) $$ **Thm Let G be a graph. The number of walks from $v_i$ to $v_j$ of length k is equal to the $(i,j)$-entry of $(A(G))^k$** --- **Proof** Let $A(G)=(a_{ij})_{n\times n}$. Then $(i,j)$-entry of $(A(G))^k$ is given by $$ [(A(G))^k]_{ij}=\sum a_{ii_1}a_{i_1i_2}\cdots a_{i_{k-1}j} $$ where the sum ranges over all sequences $(i_1,i_2,\dots,i_{k-1})$ with $i_t\in[n]=\{1,2,\dots,n\}$ $$ \begin{aligned} (A(G))^2_{ij}&=(A(G)\cdot A(G))_{ij}=\sum_{i_1=1}^na_{ii_1}a_{i_1j}\\ (A(G))^3_{ij}&=((A(G))^2\cdot A(G))_{ij}=\sum_{i_2=1}^n(\sum_{i_1=1}^na_{ii_1}a_{i_1i_2})a_{i_2j}=\sum_{i_1,i_2=1}^{n}a_{ii_1}a_{i_1i_2}a_{i_2j}\\ \end{aligned} $$ By the definition of adjacency matrix, $a_j=1\Leftrightarrow v_iv_j\in E(G)$ It follows that the summand $a_{ii_1}a_{i_1i_2}\cdots a_{i_{k-1}j}$ is 1 $\Leftrightarrow\ v_iv_{i_1}v_{i_2}\cdots v_{i_{k-1}}v_j$ is a walk of length k. Hence the summing over all $(i_1,\cdots,i_{k-1})$ just gives the total number of walks of length k from $v_i$ to $v_j$ --- A(G) is $n\times n$ symmetric matrix. All n eigenvalues of A(G) are real. Assume the n eigenvalues satisfy $$ \lambda_1\geq\lambda_2\geq\cdots\geq\lambda_n $$ Note that $\lambda_1+\lambda_2+\cdots+\lambda_n=0$,some eigenvalues could be negative.

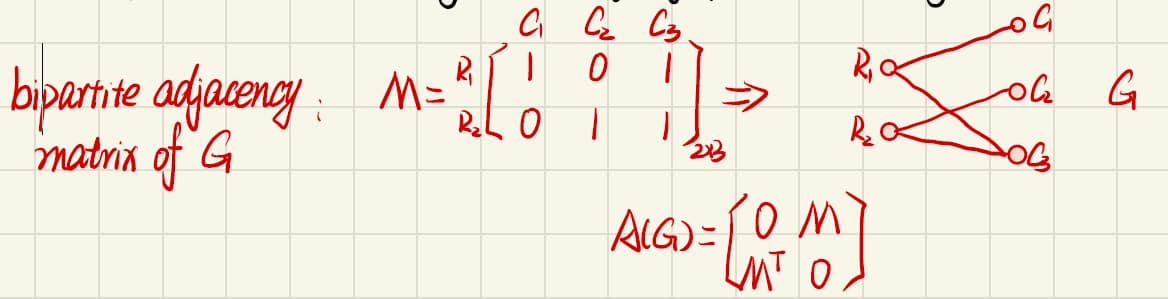

If G is a bipartite graph, the eigenvalues are symmetric about origin.

Thm Let G be a graph with eigenvalues $\lambda_1,\cdots,\lambda_n$. Then the number of closed walks in G of length k is given by

Proof

Let G be a graph with adjacency matrix A(G). Then it follows from the above theorem that

Since A(G) has $\lambda_i$ as an eigenvalue with $i\in{1,2,\cdots,n}$, the matrix $A(G)^k$ has eigenvalue $\lambda_i^k$for $i\in{1,2,\cdots,n}$

$A(G)\vec{v_i}=\lambda_i\vec{v_i},\quad A(G)^k\vec{v_i}=A(G)^{k-1}A(G)\vec{v_i}=A(G)^{k-1}\lambda\vec{v_i}=\lambda_i^k\vec{v_i}$Then, $tr(A(G)^k)=\sum{i=1}^n\lambda_i^k$, so $w_G(k)=tr(A(G)^k)=\sum{i=1}^n\lambda_i^k$

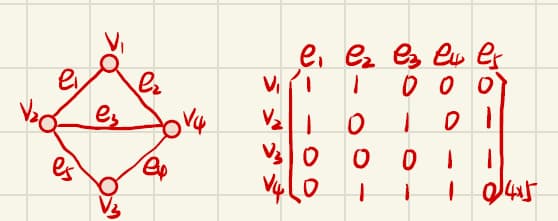

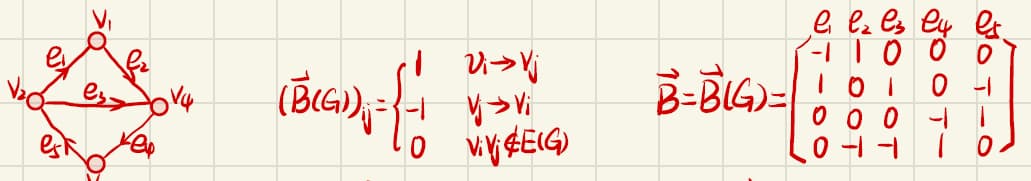

Incidence matrix and Laplacian matrix

Let G be a graph s.t. $V(G)={v_1,v_2,\cdots,v_n}$ and $E(G)={e_1,e_2,\cdots,e_m}$

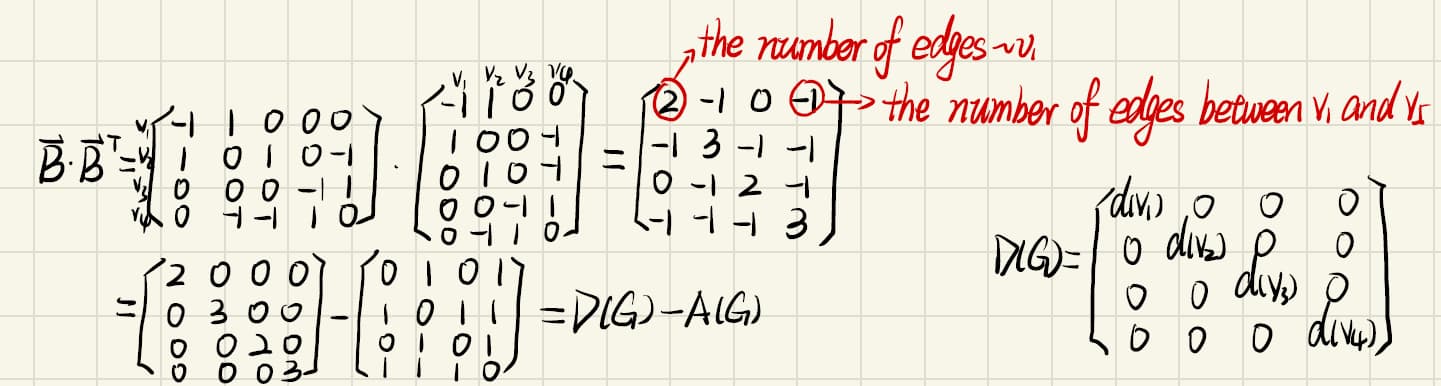

$D(G)$ is a diagonal matrix whose diagonal entries are the degrees of vertices.

Laplacian matrix of G: $L(G)= D(G)-A(G)=\vec{B}\cdot\vec{B}^T$

Proposition. Let G be a graph. Then L(G) is positive semi-definite and consequently, all eigenvalues of L(G) are real and non-negative.

$\tau(G)$= the total number of spanning trees in G.

Thm(Matrix Tree Theorem) Let G be a graph with vertices $v_1,\dots,v_n$. Then $\tau(G)=det(L_0(G))$ where $L_0(G)$ is obtained from $L(G)$ by deleting the i-th row and i-th column for any $i\in[n]={1,2,\dots,n}$

Proof.

If T is a spanning tree, either $e\in T$ or $e\not\in T$.

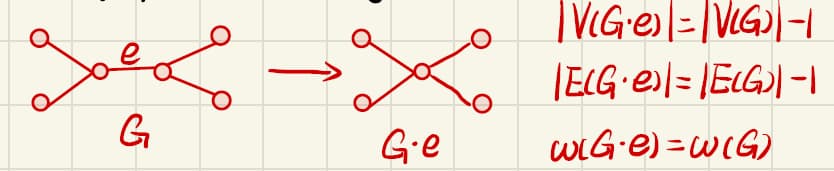

An edge e of G is said to be contracted if it is deleted and its ends are identified, the resulting graph is denoted by $G\cdot e$.

If $e\not\in T$, T is a spanning tree of G-e; if $e\in T$, $T\cdot e$ is a spanning tree of $G\cdot e$.

Therefore, $\tau(G)=\tau(G-e)+\tau(G\cdot e)$

Use induction on the number of edges of G.

If G has no edges and n=1, so $\tau(G)=1=det(L_0(G))$ where $L_0(G)$ is a $0\times 0$ matrix with determinant 1 by convenience.

If $n>1$, then $\tau(G)=0$, and $L_0(G)$ is a 0-matrix in which every entry is 0. So $\tau(G)=det(L_0(G))$ .

Therefore, the statement holds for $|E(G)|=0$.

Assume the statement holds all graph with number of edges less than $|E(G)|$.

By recordering the vertices of G, we may assume that $i=1$ and $v_1v_2\in E(G)$, Let $e=v_1v_2$. Then $\tau(G)=\tau(G-e)+\tau(G\cdot e)$.

By inductive hypothesis, $\tau(G-e)=det(L_0(G-e))$ and $\tau(G\cdot e)=det(L_0(G\cdot e))$.

Assume $L_0(G)=\begin{pmatrix}d_2&P\P^T&R\end{pmatrix}$ obtained from $L(G)=\left(\begin{array}{c:c:c}d_1&1&\cdots\\hdashline1&d_2&P\\hdashline\vdots&P^T&R\end{array}\right)$ by deleting the first row and the first column.

Then $L_0(G-e)=\begin{pmatrix}d_2-1&P\P^T&R\end{pmatrix}$ and $L_0(G\cdot e)=R$

Note that $L_0(G)=L_0(G-e)+\begin{pmatrix}1&\vec{0}\\vec{0}^T&R\end{pmatrix}$

Using the Laplace expansion along the first row,

Thm Let G be a connected graph with n vertices. Suppose that the eigenvalues of L(G) are $\mu1\geq\mu_2\geq\cdots\geq\mu{n-1}\geq\mu_n=0$. Then

Proof

Let $L(G)$ be the Laplacian matrix and let

So the cofficient of $\lambda$ term is $-\mu1\mu_2\cdots\mu{n-1}$. Note that, consider $L(G)-\lambda I$.

Add all rows of $L(G)-\lambda I$ except the first row to the first row, and let $M(\lambda)$ be the new resulting matrix.

Then $det(M(\lambda))=det(L(G)-\lambda I)$ and the first row of $M(\lambda)=[-\lambda,-\lambda,\cdots,-\lambda]$.

Let $N(\lambda)$ be the resulting matrix obtained from $M(\lambda)$ by factoring out $-\lambda$.

Then $M(\lambda)=-\lambda N(\lambda)$ and $det(M(\lambda))=-\lambda det(N(\lambda))$, So

Therefore, the cofficient of $\lambda$ term in $det(L(G)-\lambda I)$is equal to $-det(N(0))$.

Add all columns of $N(0)$ except the first column to the first column.

(Note that $i^{th}$-row $(i>1)$ of $N(0)$ is the same as the $i^{th}$-row $(i>1)$ of $L(G)$Then the first column of the new matrix is $\left[\begin{matrix}n&0&0&\cdots&0\end{matrix}\right]^T$.

Use Laplace expansion along the $1^{st}$ column, $det(N(0))=n\cdot det(L_0(G))$

By previous theorem,